WARNING: Minority rule is possible

Detecting minority rule in PoS networks, or "On setting a default"

DAO security

Decentralized autonomous organizations (DAOs) are software cryptographically bound to obey the democratic wishes of their token holders.1

In the context of DAOs, security looks like a voting problem. Whoever controls the voting power controls the DAO.

If smart contracts are self-funding bug bounties, DAOs are games of Nomic made interesting.

DAO DAO

I’ve been working on DAO DAO. We’re a DAO that builds a tool that helps people build DAOs.

One of our primary goals is to set good defaults. An energy-efficient blockchain, for example. We also want to help users build robust institutions. Part of that—a small but essential part of that—is producing voting systems that can resist gaming. We hope to “catch” governance systems prone to manipulation and guide users to make them more robust.2

One prime vector for manipulation: minority rule. If a minority can override a majority, that minority can “escalate its privilege”: it can change the rules of voting to exclude its opponents, slowly shrinking its circle until the voting system itself can be crushed in the palm of the ruling party’s hands.3

Minority rule in DAOs: How much is too much?

In DAOs, minority rule is a constant. This is a consequence of transferable governance tokens. If everyone has the same number of tokens, voting weight is equal. But as soon as someone transfers a governance token to someone else, voting weight becomes unequal, and minority rule (albeit a slim kind) can emerge.

How unequal is too unequal? We accept that corporations can give unequal voting weight while still being “fair.” Worker-owned cooperatives, for example, may provide more voting weight to workers who have had a longer tenure at the organization. On the other hand, there are corporations like Facebook, in which the leader has so much control that no one else’s vote matters.

Exactly how much centralization of voting power is too much?

This is the question Zeke Medley and I encountered while trying to build a warning message. (You can see our first crack at this warning message above.) Our goal was to show a message during DAO creation whenever the designer sets up a DAO in a way that distorts voting.

At what threshold should this warning appear?

A look at today’s DAOs

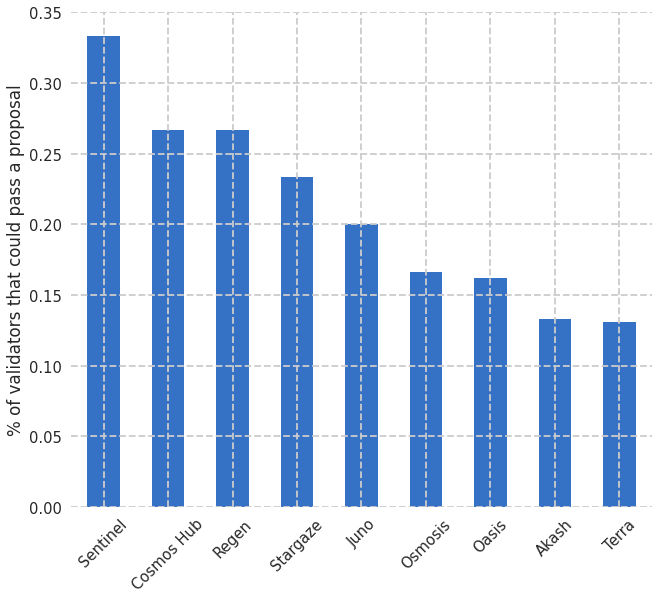

One way of answering this question: in currently existing DAOs, what is the smallest proportion of validators that could ram through an unpopular proposal?

The answer turned out to be about 20%. (Source code here).4 In the average DAO, 20% of the voting weight can (if they so choose) pass a proposal that the remaining 80% of the voting weight opposes.
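The measurement described above can be sketched in a few lines of Python. This is a minimal sketch, not the linked source code: the function name `minority_rule_quotient`, the simple-majority passing rule (strictly more than half of the total weight), and the sample weights are all assumptions made for illustration.

```python
from typing import Sequence


def minority_rule_quotient(
    weights: Sequence[float], passing_threshold: float = 0.5
) -> float:
    """Return the smallest fraction of voters whose combined weight
    strictly exceeds `passing_threshold` of the total voting weight.

    Assumption: a proposal passes once its supporters hold more than
    `passing_threshold` of all weight (simple majority by default).
    """
    if not weights or any(w < 0 for w in weights):
        raise ValueError("weights must be a non-empty list of non-negative numbers")
    total = sum(weights)
    if total <= 0:
        raise ValueError("total voting weight must be positive")

    running = 0.0
    # Greedy: the heaviest voters reach the bar with the fewest members.
    for count, weight in enumerate(sorted(weights, reverse=True), start=1):
        running += weight
        if running > passing_threshold * total:
            return count / len(weights)
    # Only reachable if passing_threshold >= 1: everyone is needed.
    return 1.0


# Hypothetical distribution: ten voters, one holding 40% of the weight.
skewed = [40, 15, 10, 10, 5, 5, 5, 4, 3, 3]
print(minority_rule_quotient(skewed))

# Equal weights for comparison.
print(minority_rule_quotient([1] * 10))
```

In the skewed example the two heaviest voters hold 55 of 100 units, so two of ten voters are enough to pass a proposal. With equal weights, six of ten are needed.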

See the Pareto principle. It’s no surprise that 20% of the validators share 80% of the market. The question is: should they?

Remember: We can make this market work however we want. We can enforce competition as we see fit. But only when the vote respects “our” wishes. If those powerful validators become powerful enough, they’ll be the only ones making the rules.

Setting a default

What’s the right threshold for warning users?

We need some threshold—some percentage—beyond which our warning, above, should appear.
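To make the idea of a threshold concrete, here is one hedged sketch of how such a check might look at DAO creation time. Everything here is hypothetical: the names `WARNING_THRESHOLD` and `minority_rule_warning`, the 20% default, the simple-majority rule, and the choice to warn only when the winning minority is strictly smaller than the threshold. It is not DAO DAO's actual implementation.

```python
from itertools import accumulate
from typing import Optional, Sequence

# Hypothetical default, taken from the ~20% figure observed above.
WARNING_THRESHOLD = 0.20


def smallest_winning_fraction(weights: Sequence[float]) -> float:
    """Fraction of voters (heaviest first) needed to hold more than
    half of the total weight. Assumes simple-majority voting."""
    ranked = sorted(weights, reverse=True)
    total = sum(ranked)
    for count, running in enumerate(accumulate(ranked), start=1):
        if running > total / 2:
            return count / len(ranked)
    raise ValueError("weights must contain at least one positive value")


def minority_rule_warning(
    weights: Sequence[float], threshold: float = WARNING_THRESHOLD
) -> Optional[str]:
    """Return a warning string if fewer than `threshold` of the voters
    can pass a proposal on their own, otherwise None."""
    fraction = smallest_winning_fraction(weights)
    if fraction < threshold:
        return (
            "WARNING: Minority rule is possible "
            f"({fraction:.0%} of voters can pass a proposal alone)"
        )
    return None


# One member holds 70% of the weight in a six-member DAO: warn.
print(minority_rule_warning([70, 10, 5, 5, 5, 5]))

# Five members with equal weight: no warning.
print(minority_rule_warning([1, 1, 1, 1, 1]))
```

Keeping the threshold as a parameter means the default can change as new DAOs emerge without touching the check itself.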

Normatively—and for now, during the earliest days of DAOs—20% seems to be the central point between “high” and “low.” Is this central point a good one? Is it perhaps too low—should we push users to be more aggressive in decentralizing voting power?

I’d be curious to hear your thoughts on this question. I don’t know the answer. I do know that it matters a lot what we set. This number will calcify, becoming a Schelling point for all future DAOs built with the tool. I wouldn’t be surprised to see most DAOs cluster around the number we pick!

What do you think?

For now, we can pick a normative number based on currently existing DAOs.

But how should we change the number as new DAOs emerge? Should we figure out a way to invent some threshold number prescriptively?

You can respond to this email.

Acknowledgments

Zeke Medley, @chimpdaiz, and in memory of Doug Tygar, who taught me just about everything I knew about cryptography.

I’m a researcher at the UC Berkeley Center for Long-Term Cybersecurity where I direct the Daylight Lab. This newsletter is my work as I do it: more than half-baked, less than peer-reviewed.

Compared to traditional systems, DAOs let cryptography do a lot more of the work. The attack surface area has mostly to do with keeping people in charge of their keys.

Of course, getting people to manage keys is a herculean usable security task, one understood as early as Whitten and Tygar, 1999. You might argue it’s the “classic problem” in usable security. At its best, web3 is a chance to get functional key management right.

I think “wallets”—at their core, a UX metaphor for a keypair—may finally help people secure a secret key. There’s still a lot of design work to be done on them. But they could be the most lasting contribution of this “web3” era: bringing usable PKI to the masses. (Not just today’s internet users, but tomorrow’s, most of whom live in the global South).

Remember, we’re not trying to create an objective measure by which malfeasance is impossible. We’re trying to warn users about implications they may not have anticipated. The warning message is for people who want to do things well. How users might circumvent our warning to lull others into a distorted DAO is an interesting question, but one for another time.

This point should be intuitive to Americans.

Let’s compare this graph with the HHI measurements from last time:

There’s not much relationship between the two measures (r(8) = -0.08). Why? One possible reason is that HHI “likes” when more people compete, whereas the minority rule quotient is agnostic to the number of participants. I’m open to other explanations or ideas of when you might want to use one over the other.
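A small sketch can illustrate that possible reason. It assumes HHI is computed as the sum of squared weight shares on a 0 to 1 scale (the scale used in the earlier measurements may differ), and that the minority rule quotient is the smallest fraction of voters holding more than half of the weight. Both function bodies are illustrative assumptions, not the code behind the graphs.

```python
from typing import Sequence


def hhi(weights: Sequence[float]) -> float:
    """Herfindahl-Hirschman Index: sum of squared shares (0 to 1 scale)."""
    total = sum(weights)
    return sum((w / total) ** 2 for w in weights)


def minority_rule_quotient(weights: Sequence[float]) -> float:
    """Smallest fraction of voters holding more than half the weight
    (assumes simple-majority voting)."""
    ranked = sorted(weights, reverse=True)
    total = sum(ranked)
    running = 0.0
    for count, weight in enumerate(ranked, start=1):
        running += weight
        if running > total / 2:
            return count / len(ranked)
    raise ValueError("weights must contain at least one positive value")


# Perfectly equal DAOs of different sizes.
for n in (10, 1000):
    equal = [1.0] * n
    print(n, round(hhi(equal), 4), round(minority_rule_quotient(equal), 4))
```

With equal weights, HHI falls from roughly 0.1 at ten voters to roughly 0.001 at a thousand, while the quotient stays just above one half in both cases. HHI rewards the larger electorate; the quotient barely notices it.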